Note: The creation of this article on testing Focus Not Obscured (Minimum) was human-based, with the assistance of artificial intelligence.

Explanation of the success criterion

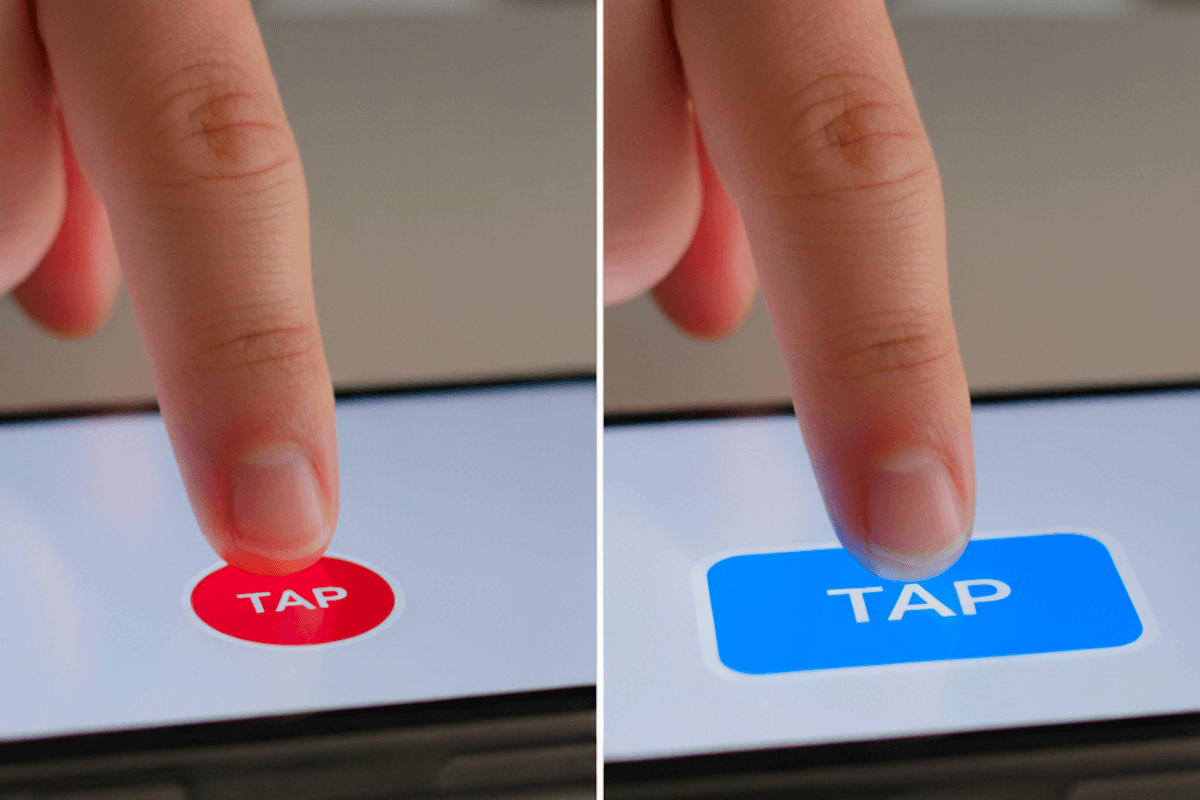

WCAG 2.4.11 Focus Not Obscured (Minimum) is a Level AA Success Criterion, introduced in WCAG 2.2. It ensures one simple yet critical truth: users must always be able to see where they are on the screen. For anyone navigating with a keyboard, switch device, or voice input, the visible focus indicator (often a border, outline, or highlight) is the key to understanding which element is currently active.

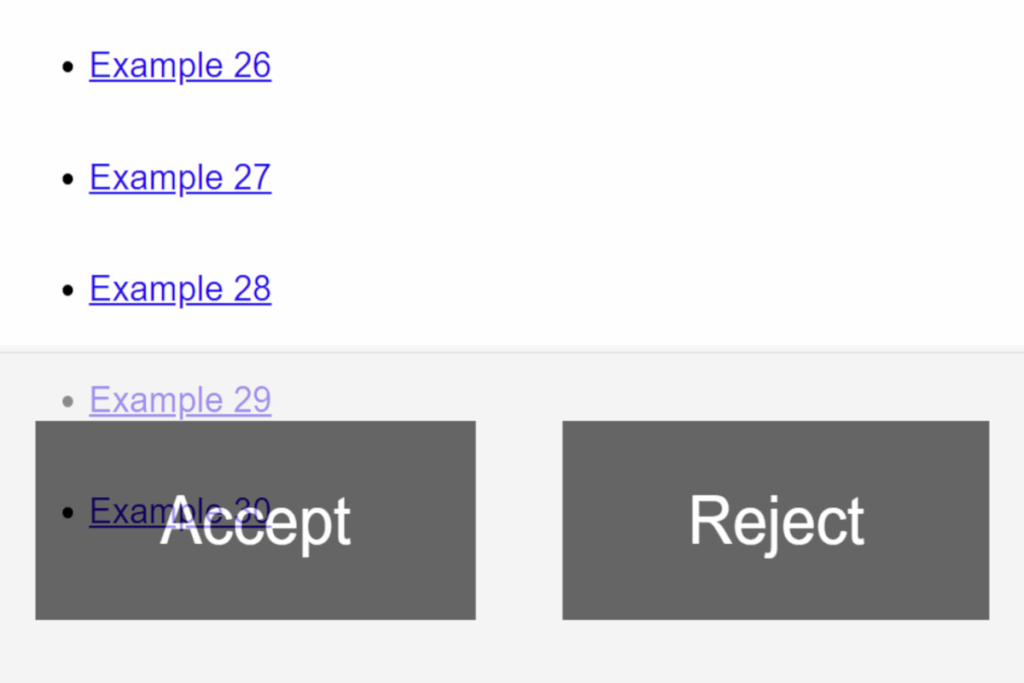

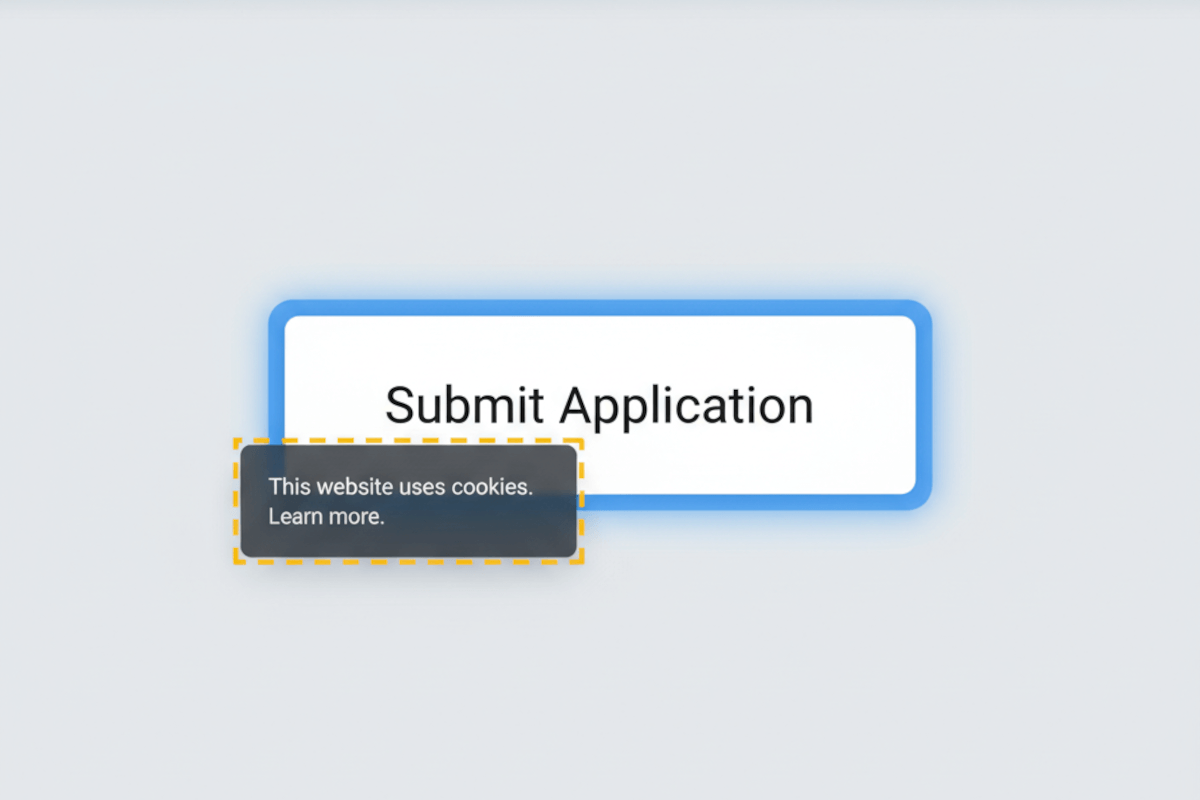

This criterion addresses a growing challenge in modern web design. As interfaces become more dynamic, with sticky headers, pop-up modals, floating toolbars, and responsive elements, it’s increasingly easy for these components to unintentionally hide the focus indicator. Even the most elegant focus style loses its value if it’s blocked from view.

To conform, a user interface component that receives keyboard focus must not be entirely hidden by author-created content: at least part of it must remain visible. Developers should ensure that layouts, overlays, and scrolling behaviors respect this requirement, for example by reserving space for sticky headers with CSS `scroll-padding` so that focused elements do not scroll underneath them. The result is more than compliance: it gives users confidence when navigating complex interfaces, preserving both orientation and flow.
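The core rule can be expressed as a geometry check. The TypeScript sketch below is a minimal illustration under stated assumptions, not a complete test: it assumes you already have the focused component's bounding box and the boxes of author-created content that might cover it (sticky headers, banners, non-modal dialogs), all in the same viewport coordinates. The names `LayoutBox`, `pointInBox`, `uniqueSorted`, and `isEntirelyObscured` are hypothetical. It reports a failure only when the overlays together cover every part of the component, matching the "not entirely hidden" wording; it ignores transparency and does not judge whether the visible part is useful.

```typescript
// Minimal sketch of the "not entirely hidden" rule in WCAG 2.4.11 (Minimum).
// Assumption: all boxes are viewport coordinates in CSS pixels (for example from
// getBoundingClientRect()), and `overlays` contains only author-created content.
interface LayoutBox {
  left: number;
  top: number;
  right: number;
  bottom: number;
}

// Half-open test: left/top edges are inside, right/bottom edges are outside.
function pointInBox(b: LayoutBox, x: number, y: number): boolean {
  return x >= b.left && x < b.right && y >= b.top && y < b.bottom;
}

// Sorts numbers ascending and drops duplicates (avoids zero-width cells).
function uniqueSorted(values: number[]): number[] {
  const sorted = values.slice().sort((a, b) => a - b);
  return sorted.filter((v, i) => i === 0 || v !== sorted[i - 1]);
}

// True only if the union of `overlays` covers every part of `target`.
// The target is cut into cells along every overlay edge that crosses it; no
// edge runs through a cell's interior, so each overlay covers a cell either
// completely or not at all, and testing the cell's centre is sufficient.
function isEntirelyObscured(target: LayoutBox, overlays: LayoutBox[]): boolean {
  if (target.right <= target.left || target.bottom <= target.top) {
    return false; // zero-area box: cannot be judged geometrically, review manually
  }
  const xs = [target.left, target.right];
  const ys = [target.top, target.bottom];
  for (const o of overlays) {
    for (const x of [o.left, o.right]) {
      if (x > target.left && x < target.right) xs.push(x);
    }
    for (const y of [o.top, o.bottom]) {
      if (y > target.top && y < target.bottom) ys.push(y);
    }
  }
  const cutsX = uniqueSorted(xs);
  const cutsY = uniqueSorted(ys);
  for (let i = 0; i + 1 < cutsX.length; i++) {
    for (let j = 0; j + 1 < cutsY.length; j++) {
      const cx = (cutsX[i] + cutsX[i + 1]) / 2;
      const cy = (cutsY[j] + cutsY[j + 1]) / 2;
      if (!overlays.some((o) => pointInBox(o, cx, cy))) {
        return false; // an uncovered cell exists, so part of the component is visible
      }
    }
  }
  return true;
}
```

In a browser, the target box could come from `document.activeElement.getBoundingClientRect()`. Deciding which elements count as author-created overlays still requires judgment, which is why a check like this is only one input to testing.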

Who does this benefit?

- Keyboard-only users – Always know where they are when navigating without a mouse.

- People with motor disabilities – Experience uninterrupted navigation when using switches, voice input, or alternative controls.

- Users with low vision – Avoid losing track of focus behind sticky headers or pop-ups.

- Screen magnifier users – Maintain orientation even when viewing a limited portion of the screen.

- People with cognitive disabilities – Reduce confusion through consistent visual feedback and predictable focus movement.

- All users – Gain a smoother, more intuitive experience, especially on sites with modern, fixed-position layouts.

Testing via Automated Testing

Automated testing provides the essential first pass: fast, scalable, and systematic. Automated tools can detect common structural risk factors, such as fixed or sticky headers and off-screen elements, that may interfere with focus visibility. They help pinpoint risk areas across large digital properties, making them invaluable early in the testing process.
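As a rough illustration of this kind of structural scan, the sketch below flags fixed or sticky layers and focusable elements pushed off the page. It is a heuristic under stated assumptions: the `ElementSnapshot` shape and the function names are hypothetical, and it expects layout data to have been collected beforehand (in a browser, `getComputedStyle(el).position` and `el.getBoundingClientRect()` are real APIs that could supply it). Every finding is a place to look, not a confirmed failure.

```typescript
// Hypothetical snapshot of one element's layout, collected beforehand.
interface ElementSnapshot {
  selector: string;
  position: string; // computed CSS `position` value, e.g. "static", "fixed", "sticky"
  focusable: boolean;
  // Box in document coordinates (CSS pixels)
  left: number;
  top: number;
  right: number;
  bottom: number;
}

type RiskKind = "fixed-or-sticky" | "off-screen-focusable";

interface RiskFinding {
  selector: string;
  risk: RiskKind;
}

// Flags elements that deserve follow-up; a finding is a risk area, not a failure.
function scanForFocusRisks(snapshots: ElementSnapshot[]): RiskFinding[] {
  const findings: RiskFinding[] = [];
  for (const el of snapshots) {
    if (el.position === "fixed" || el.position === "sticky") {
      // Fixed and sticky layers stay on screen while content scrolls beneath them
      findings.push({ selector: el.selector, risk: "fixed-or-sticky" });
    } else if (el.focusable && (el.right <= 0 || el.bottom <= 0)) {
      // Focusable element positioned entirely above or left of the page origin
      findings.push({ selector: el.selector, risk: "off-screen-focusable" });
    }
  }
  return findings;
}
```

A scan like this only sees structure; whether a flagged header actually covers a focused element depends on runtime layout, which is exactly the limitation of automation.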

However, automation has its limits. These tools analyze code, not perception. They can’t determine whether a focus indicator is actually visible to a user. Dynamic overlays, responsive breakpoints, and conditional states often hide focus in ways that static code checks can’t detect. As a result, automation alone may overlook subtle but significant user barriers.

Testing via Artificial Intelligence (AI)

AI-based testing takes accessibility testing beyond static analysis by approximating how users visually experience content. Through image recognition, visual modeling, and simulated navigation, AI can detect when a focused element or its indicator is partially or completely hidden on screen. It bridges a critical gap, connecting code-level issues with their real visual impact.
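An AI model is beyond the scope of a short example, but the underlying idea of judging focus from rendered pixels can be shown with a much simpler, non-AI heuristic: compare a screenshot taken before an element receives focus with one taken after, and count how many pixels changed around the element. This sketch assumes grayscale screenshots of identical size stored as row-major arrays; `GrayImage`, `PixelBox`, the default threshold of 16, and the function names are illustrative assumptions. If nothing changes near the focused element, its indicator is probably not visible, perhaps because something covers it.

```typescript
// Grayscale screenshot: row-major luminance values (0-255), width * height entries.
interface GrayImage {
  width: number;
  height: number;
  data: number[];
}

// Region to inspect, in pixels; right and bottom are exclusive.
interface PixelBox {
  left: number;
  top: number;
  right: number;
  bottom: number;
}

// Counts pixels inside `box` whose luminance changed by more than `threshold`.
function changedPixelsInBox(before: GrayImage, after: GrayImage, box: PixelBox, threshold: number): number {
  if (before.width !== after.width || before.height !== after.height) {
    throw new Error("Screenshots must have the same dimensions");
  }
  // Clamp the box to the image so partially off-screen regions are handled.
  const x0 = Math.max(0, Math.floor(box.left));
  const y0 = Math.max(0, Math.floor(box.top));
  const x1 = Math.min(before.width, Math.ceil(box.right));
  const y1 = Math.min(before.height, Math.ceil(box.bottom));
  let changed = 0;
  for (let y = y0; y < y1; y++) {
    for (let x = x0; x < x1; x++) {
      const i = y * before.width + x;
      if (Math.abs(after.data[i] - before.data[i]) > threshold) changed++;
    }
  }
  return changed;
}

// Heuristic verdict: some visible change near the element suggests a visible indicator.
function indicatorLooksVisible(
  before: GrayImage,
  after: GrayImage,
  box: PixelBox,
  threshold = 16,
  minChangedPixels = 1,
): boolean {
  return changedPixelsInBox(before, after, box, threshold) >= minChangedPixels;
}
```

Animations, carousels, and blinking text cursors also change pixels, which is one reason production tools need far more sophisticated models than a raw diff.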

Yet, even AI has boundaries. Complex animations, transient overlays, or custom focus behaviors can confuse algorithms. The effectiveness of AI depends heavily on its training data and how well it has been tuned to recognize diverse interface patterns. While not infallible, AI-based testing provides valuable context and efficiency, surfacing visibility issues that automation alone would miss.

Testing via Manual Testing

Manual testing remains the gold standard for verifying compliance and usability. Skilled testers can navigate interfaces using a keyboard, experience the page as a real user would, and confirm whether focus indicators are genuinely perceivable and useful. They can also judge partial visibility, a nuance that automated and AI systems often struggle to interpret reliably.
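A manual walkthrough is easier to act on when each Tab stop is recorded in a consistent form. The following sketch assumes a hypothetical record format (`FocusStop`, `FocusVisibility`, and `summarizeWalkthrough` are illustrative names) and encodes the distinction testers are best placed to judge: under 2.4.11 (Minimum), a component that is only partly covered passes, while one entirely hidden by author-created content fails. Choosing the category for each stop stays with the human tester.

```typescript
// Hypothetical record format for a manual keyboard walkthrough: one entry per Tab stop.
type FocusVisibility = "fully-visible" | "partially-visible" | "entirely-hidden";

interface FocusStop {
  order: number;       // position in the Tab sequence
  element: string;     // tester's description or selector
  visibility: FocusVisibility; // "entirely-hidden" assumed to mean hidden by author-created content
  obscuredBy?: string; // e.g. "sticky header", when anything overlaps it
}

interface WalkthroughSummary {
  total: number;
  failing: number[]; // entirely hidden: fails 2.4.11 (Minimum)
  partial: number[]; // partly covered: passes the Minimum criterion, still worth noting
}

function summarizeWalkthrough(stops: FocusStop[]): WalkthroughSummary {
  const failing: number[] = [];
  const partial: number[] = [];
  for (const stop of stops) {
    if (stop.visibility === "entirely-hidden") failing.push(stop.order);
    else if (stop.visibility === "partially-visible") partial.push(stop.order);
  }
  return { total: stops.length, failing, partial };
}
```

Keeping partial results separate from failures lets a report stay accurate about conformance while still surfacing stops where a covered indicator may make focus harder to follow.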

Manual testing is time-intensive, but its value is unmatched. It captures real-world interactions, confirms whether the visual feedback feels natural and usable, and ensures that users aren’t left guessing where their focus has gone.

Which approach is best?

No single method can fully capture the intent of WCAG 2.4.11 Focus Not Obscured (Minimum). Real accessibility assurance comes from a hybrid testing strategy, one that integrates the precision of automation, the intelligence of AI, and the insight of human expertise. This layered approach reflects how users actually experience digital environments: dynamically, visually, and contextually.

Start with automation to establish a foundational understanding of where focus visibility risks are most likely to occur. Automated scans can efficiently surface recurring code patterns and layout structures, such as fixed headers, off-screen elements, or modal overlays, that tend to cause focus obstructions. By running these scans continuously in CI/CD pipelines, teams can identify and prevent regressions early in the development lifecycle, ensuring focus visibility remains a built-in quality standard rather than an afterthought.
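One way to make the CI/CD step concrete is a gate that compares the current run against a stored baseline and blocks only newly introduced obscured-focus results. This is a minimal sketch: the `FocusCheckResult` shape, the page-plus-selector key, and the function names are assumptions, and producing the results (for example, by tabbing through pages in a headless browser) is not shown.

```typescript
// Hypothetical result of one automated focus check in CI.
interface FocusCheckResult {
  page: string;      // URL path that was tested
  selector: string;  // element that received focus
  obscured: boolean; // true if the check judged it entirely hidden
}

// Stable identity for a result across runs (assumes selectors are stable).
function resultKey(r: FocusCheckResult): string {
  return `${r.page} :: ${r.selector}`;
}

// Obscured results in `current` that were not already obscured in `baseline`.
function newRegressions(baseline: FocusCheckResult[], current: FocusCheckResult[]): string[] {
  const known: { [key: string]: boolean } = {};
  for (const r of baseline) {
    if (r.obscured) known[resultKey(r)] = true;
  }
  return current.filter((r) => r.obscured && !known[resultKey(r)]).map(resultKey);
}

// Conventional CI exit code: non-zero fails the pipeline step.
function gateExitCode(regressions: string[]): number {
  return regressions.length > 0 ? 1 : 0;
}
```

Letting known failures through keeps the gate usable on legacy pages while still stopping regressions; a stricter team could fail the build on any obscured result instead. In a Node-based pipeline, the returned code could be passed to `process.exit`.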

Then bring in AI-based testing to elevate analysis from static code to visual reality. AI can simulate rendered experiences, detect when focus indicators are obscured by other layers, and assess visibility across varying device viewports and interaction states. This helps uncover issues automation alone misses, such as partial overlaps, transitional animations, or responsive design changes that hide focus under certain conditions. AI’s ability to interpret context rather than code adds a predictive dimension, allowing accessibility teams to identify not just where problems exist, but where they are likely to occur.
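The multi-viewport aspect can also be illustrated without any AI: sweep a set of viewport configurations and apply the same visibility rule to each. This sketch makes strong simplifying assumptions, repeated in its comments: sticky header and footer bars span the full width, only vertical overlap matters, and the focused element's position is measured after the browser has scrolled it into view. `ViewportCase`, `hiddenByStickyBars`, and `failingViewports` are hypothetical names.

```typescript
// Simplified model of one viewport configuration (CSS pixels, viewport coordinates).
// Assumptions: sticky bars span the full viewport width, and focusTop/focusBottom
// are measured after the browser has scrolled the focused element into view.
interface ViewportCase {
  label: string;
  viewportHeight: number;
  stickyHeaderHeight: number; // 0 if there is no sticky header
  stickyFooterHeight: number; // 0 if there is no sticky footer
  focusTop: number;
  focusBottom: number;
}

// True when no vertical slice of the focused element remains between the bars.
function hiddenByStickyBars(c: ViewportCase): boolean {
  const visibleTop = Math.max(c.focusTop, c.stickyHeaderHeight);
  const visibleBottom = Math.min(c.focusBottom, c.viewportHeight - c.stickyFooterHeight);
  return visibleBottom <= visibleTop;
}

// Labels of the configurations where the focused element is entirely hidden.
function failingViewports(cases: ViewportCase[]): string[] {
  return cases.filter(hiddenByStickyBars).map((c) => c.label);
}
```

The same focused element can pass on a desktop layout and fail on a narrow one where the sticky header grows taller, which is the kind of breakpoint-specific problem the sweep is meant to expose.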

Finally, rely on manual testing to validate what technology cannot fully interpret: the user experience itself. Skilled accessibility testers can observe focus behavior across real interactions, tabbing through menus, navigating modals, or switching between devices, to confirm that focus indicators remain perceivable, consistent, and helpful. Manual testing exposes subtleties that even advanced AI may miss, such as indicators that technically meet minimum contrast or visibility but still fail to communicate focus meaningfully to users.

Together, these three layers (automation for detection, AI for interpretation, and manual testing for validation) create a continuous, data-informed feedback loop. This hybrid strategy doesn't just check boxes for compliance; it ensures that focus visibility contributes to a coherent, usable, and inclusive experience. When applied consistently, it transforms WCAG 2.4.11 from a technical requirement into a measurable expression of design maturity and user respect, empowering every user to navigate with clarity and confidence.