Note: This article on testing Focus Appearance was written by a person with the assistance of artificial intelligence.

Explanation of the success criterion

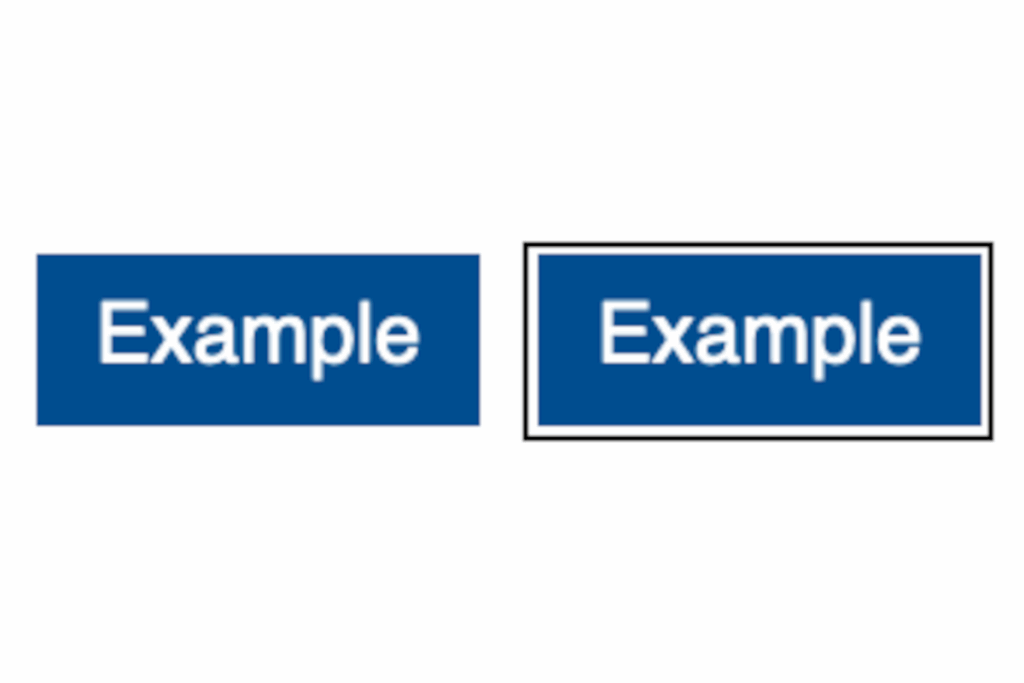

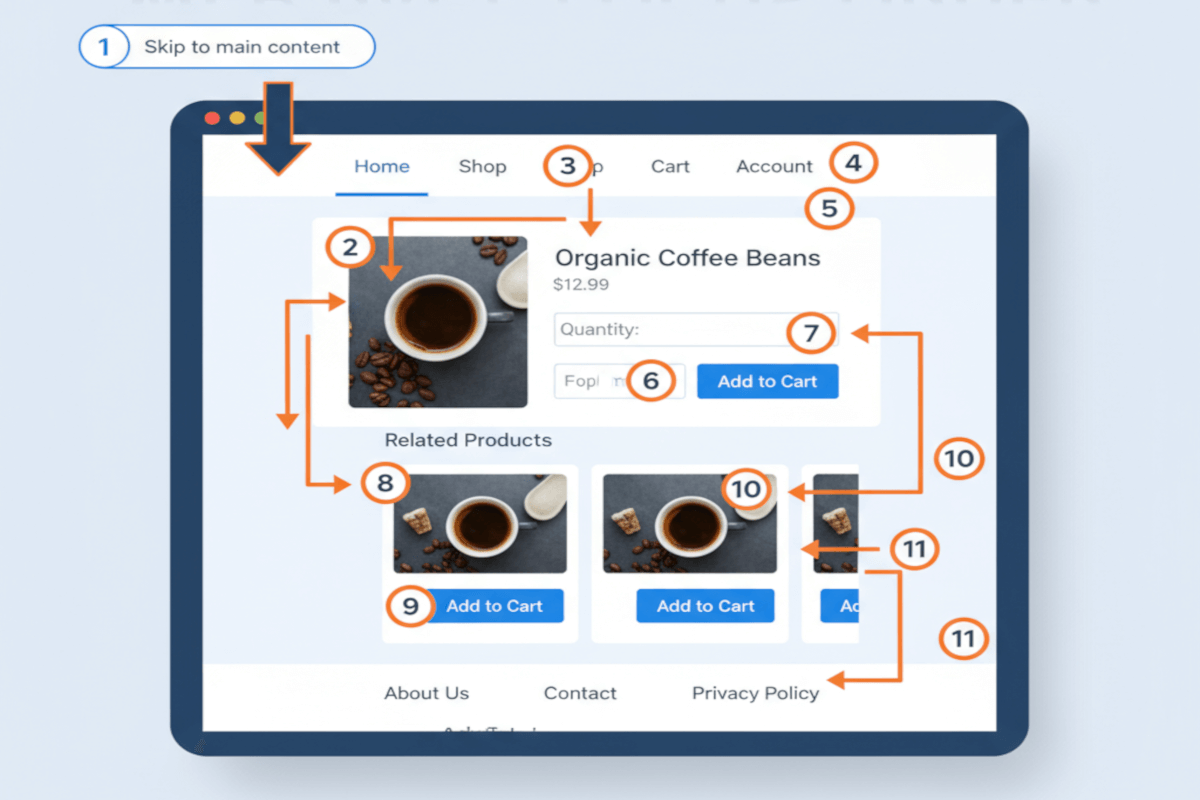

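WCAG 2.4.13 Focus Appearance is a Level AAA Success Criterion, introduced in WCAG 2.2. It ensures that users can clearly perceive which element currently holds keyboard focus, and it does so with measurable thresholds. When the focus indicator is visible, an area of it must be at least as large as a 2 CSS pixel thick perimeter of the unfocused component. Those pixels must also have a contrast ratio of at least 3:1 between the focused and unfocused states. Two kinds of indicator are exempt. The first is an indicator determined by the user agent that the author cannot adjust. The second is an indicator where the author has not modified either the indicator or its background color. While seemingly straightforward, this requirement addresses a critical aspect of user experience: the visibility and clarity of interactive elements. Focus indicators, whether borders, outlines, shadows, or other visual cues, act as a guide, helping users navigate content effectively, especially those who rely on keyboards, alternative input devices, or assistive technologies.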
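The size half of the requirement can be estimated with simple arithmetic. For a rectangular component of width w and height h, the perimeter length is 2(w + h). Multiplying by a 2-pixel thickness gives roughly 4w + 4h square CSS pixels. The sketch below assumes a rectangular component and an indicator area that has already been measured. The helper names are hypothetical, and the formula ignores corner overlap.

```python
def min_indicator_area(width_px: float, height_px: float) -> float:
    """Approximate area (square CSS pixels) of a 2px-thick perimeter around a
    rectangular component: perimeter length 2 * (w + h) times 2px.
    Corner overlap is ignored, so this is an approximation."""
    if width_px <= 0 or height_px <= 0:
        raise ValueError("component dimensions must be positive")
    return 4 * width_px + 4 * height_px


def meets_area_requirement(indicator_area_px: float, width_px: float, height_px: float) -> bool:
    """True if the measured indicator area (pixels that change with at least
    3:1 contrast, measured separately) reaches the 2px-perimeter area."""
    return indicator_area_px >= min_indicator_area(width_px, height_px)


# A hypothetical 90 x 30 CSS pixel button needs about 480 square pixels of indicator.
print(min_indicator_area(90, 30))            # 480
print(meets_area_requirement(500, 90, 30))   # True
print(meets_area_requirement(240, 90, 30))   # False (a 1px outline: 240 x 1)
```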

This criterion emphasizes not only the presence of focus indicators but also their clarity, contrast, and distinctiveness, ensuring that the focused element stands out from surrounding content. By making focus visually apparent, organizations can enhance usability and accessibility for individuals with low vision, cognitive challenges, motor impairments, or those using screen magnifiers, empowering them to interact with digital experiences confidently and independently.
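Contrast in SC 2.4.13 is measured per pixel: the same pixel's color when the component is unfocused is compared with its color when focused. The sketch below implements the WCAG relative luminance and contrast ratio formulas and applies the 3:1 threshold. The helper names and sample colors are assumptions chosen for illustration.

```python
from typing import Tuple

RGB = Tuple[int, int, int]


def linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel value to linear light (WCAG relative luminance)."""
    c = channel / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(rgb: RGB) -> float:
    r, g, b = (linearize(v) for v in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(a: RGB, b: RGB) -> float:
    """WCAG contrast ratio (L1 + 0.05) / (L2 + 0.05), where L1 is the lighter color."""
    lighter, darker = sorted((relative_luminance(a), relative_luminance(b)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def focus_change_is_sufficient(unfocused: RGB, focused: RGB, minimum: float = 3.0) -> bool:
    """True if the same pixel changes by at least `minimum`:1 when the component gains focus."""
    return contrast_ratio(unfocused, focused) >= minimum


white = (255, 255, 255)
print(round(contrast_ratio(white, (0, 0, 0)), 1))             # 21.0
print(focus_change_is_sufficient(white, (0x76, 0x76, 0x76)))  # True  (about 4.5:1)
print(focus_change_is_sufficient(white, (0xDD, 0xDD, 0xDD)))  # False (about 1.4:1)
```

A pale grey outline on a white page is a common example. It is clearly present in the markup, yet it changes too little to meet the threshold.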

Although Level AAA is considered aspirational rather than mandatory, pursuing conformance with 2.4.13 sends a strong message: accessibility is more than a checkbox; it’s a strategic commitment to inclusivity. Organizations embracing this standard demonstrate leadership in creating digital experiences that are equitable, usable, and human-centered.

Who does this benefit?

- Keyboard-only users – Clear focus indicators allow users navigating without a mouse to know which element is active.

- Low-vision users – High-contrast focus cues make it easier to track movement across interfaces.

- Users with cognitive or attention-related challenges – Distinct visual indicators reduce confusion and help maintain context.

- Motor-impaired users – Those using alternative input devices, such as switches, can confirm which control is active before selecting or activating it.

- Screen magnifier users – Visible focus ensures elements remain perceivable at high zoom levels.

Testing via Automation

Automated testing excels at speed and scalability. It can rapidly scan large codebases to identify missing focus indicators, such as styles that remove the default outline without providing a replacement. It can also find improper implementation and deviations from standard patterns. This makes it particularly valuable in CI/CD pipelines, where frequent changes require continuous validation. By flagging structural and technical issues early, automated testing helps development teams maintain a baseline level of accessibility across dynamic, evolving products.
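A static style check of the kind described above can be sketched in a few lines. The sketch below flags :focus and :focus-visible rules that set the outline to none or 0 without declaring anything that looks like a replacement indicator. The regular expressions and the list of "replacement" properties are assumptions made for illustration. This is not how any particular tool works.

The check errs toward not flagging. For example, any border declaration counts as a replacement, even border: none. It also does not follow the cascade, so a replacement defined in another rule is invisible to it. Real stylesheets need a proper CSS parser.

```python
import re
from typing import List

# Heuristic patterns (assumptions for illustration, not a complete CSS parser).
COMMENT_RE = re.compile(r"/\*.*?\*/", re.DOTALL)
RULE_RE = re.compile(r"([^{}]+)\{([^{}]*)\}")  # innermost "selector { declarations }" blocks
REMOVES_OUTLINE_RE = re.compile(
    r"outline\s*:\s*(?:none|0)\s*(?:!important)?\s*(?:;|$)", re.IGNORECASE
)
# Declarations treated as a possible replacement indicator.
REPLACEMENT_RE = re.compile(
    r"(?:box-shadow|border(?:-(?:top|right|bottom|left))?(?:-(?:color|width|style))?"
    r"|outline-(?:color|width|style)|background(?:-color)?|text-decoration)\s*:",
    re.IGNORECASE,
)


def find_removed_focus_outlines(css: str) -> List[str]:
    """Return selectors of :focus / :focus-visible rules that remove the outline
    and declare nothing that looks like a replacement indicator."""
    findings = []
    for selector, body in RULE_RE.findall(COMMENT_RE.sub("", css)):
        selector = selector.strip()
        if ":focus" not in selector:
            continue
        if REMOVES_OUTLINE_RE.search(body) and not REPLACEMENT_RE.search(body):
            findings.append(selector)
    return findings


sample_css = """
a:focus { outline: none; }
/* has a replacement indicator */
button:focus-visible { outline: none; box-shadow: 0 0 0 3px #1a1a1a; }
.link:focus { outline: 0 }
input { outline: none; }
"""
print(find_removed_focus_outlines(sample_css))  # ['a:focus', '.link:focus']
```

In a pipeline, a non-empty result could fail the build. Whether a flagged rule is a real defect, and whether an unflagged replacement is actually visible, still needs the later layers.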

However, automation has inherent limitations. Rule-based checks typically inspect markup and styles rather than what is actually rendered. As a result, they cannot evaluate the perceptual quality of focus indicators, such as visual clarity, contrast, or distinctiveness in context. Custom or non-standard designs may pass automated checks but fail to provide a meaningful focus experience to real users, highlighting the need for complementary testing methods.

Testing via Artificial Intelligence (AI)

AI-based testing introduces a more nuanced layer of analysis, simulating human visual perception to evaluate how focus indicators appear across diverse devices, screen sizes, zoom levels, and color schemes. AI can detect subtle issues that automation often misses, including low-contrast indicators, overlapping elements, or focus styles that blend into complex backgrounds. By approximating real-world visual experience, AI testing bridges the gap between raw technical compliance and perceptible usability.
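Whatever model an AI tool uses, the question SC 2.4.13 asks reduces to a measurement on two renderings of the same component, one unfocused and one focused. The sketch below deliberately swaps in a plain, deterministic pixel comparison rather than a learned model. It counts pixels whose color changes by at least 3:1. It then compares that count with the 4w + 4h approximation of a 2 CSS pixel perimeter, which is the perimeter length 2(w + h) times 2 pixels.

The sketch makes several assumptions:

- Both captures are the same size.
- Each capture contains the component plus a margin where an outline could appear.
- Captures are taken at one image pixel per CSS pixel.
- Pixels are given as nested lists of RGB tuples.

Taking the screenshots is outside the sketch, and all names are hypothetical.

```python
from typing import List, Tuple

RGB = Tuple[int, int, int]
Image = List[List[RGB]]  # rows of pixels, assumed 1 image pixel per CSS pixel


def _linear(c: int) -> float:
    c = c / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def _luminance(p: RGB) -> float:
    r, g, b = (_linear(v) for v in p)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def _contrast(a: RGB, b: RGB) -> float:
    lighter, darker = sorted((_luminance(a), _luminance(b)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def contrasting_change_area(unfocused: Image, focused: Image, minimum: float = 3.0) -> int:
    """Count pixels whose color changes by at least `minimum`:1 between the two states."""
    if len(unfocused) != len(focused) or any(len(u) != len(f) for u, f in zip(unfocused, focused)):
        raise ValueError("both captures must have identical dimensions")
    return sum(
        1
        for row_u, row_f in zip(unfocused, focused)
        for pu, pf in zip(row_u, row_f)
        if _contrast(pu, pf) >= minimum
    )


def passes_focus_appearance(unfocused: Image, focused: Image, width: int, height: int) -> bool:
    """Compare the contrasting change area with the 4w + 4h perimeter approximation."""
    return contrasting_change_area(unfocused, focused) >= 4 * width + 4 * height


# Synthetic example: a hypothetical 30 x 10 component captured with a 2px margin on every side.
W, H = 30, 10
WHITE, BLACK = (255, 255, 255), (0, 0, 0)
before = [[WHITE] * (W + 4) for _ in range(H + 4)]


def ring(thickness: int) -> Image:
    """A black outline of the given thickness drawn in the captured margin."""
    return [
        [BLACK if min(x, y, W + 3 - x, H + 3 - y) < thickness else WHITE for x in range(W + 4)]
        for y in range(H + 4)
    ]


print(passes_focus_appearance(before, ring(2), W, H))  # True  (176 >= 160)
print(passes_focus_appearance(before, ring(1), W, H))  # False (92 < 160)
```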

Despite its sophistication, AI testing is not infallible. Its effectiveness depends on model accuracy and training, and it may misinterpret highly dynamic content, complex interactions, or unconventional designs. AI should therefore be viewed as a powerful supplement, not a replacement for human judgment.

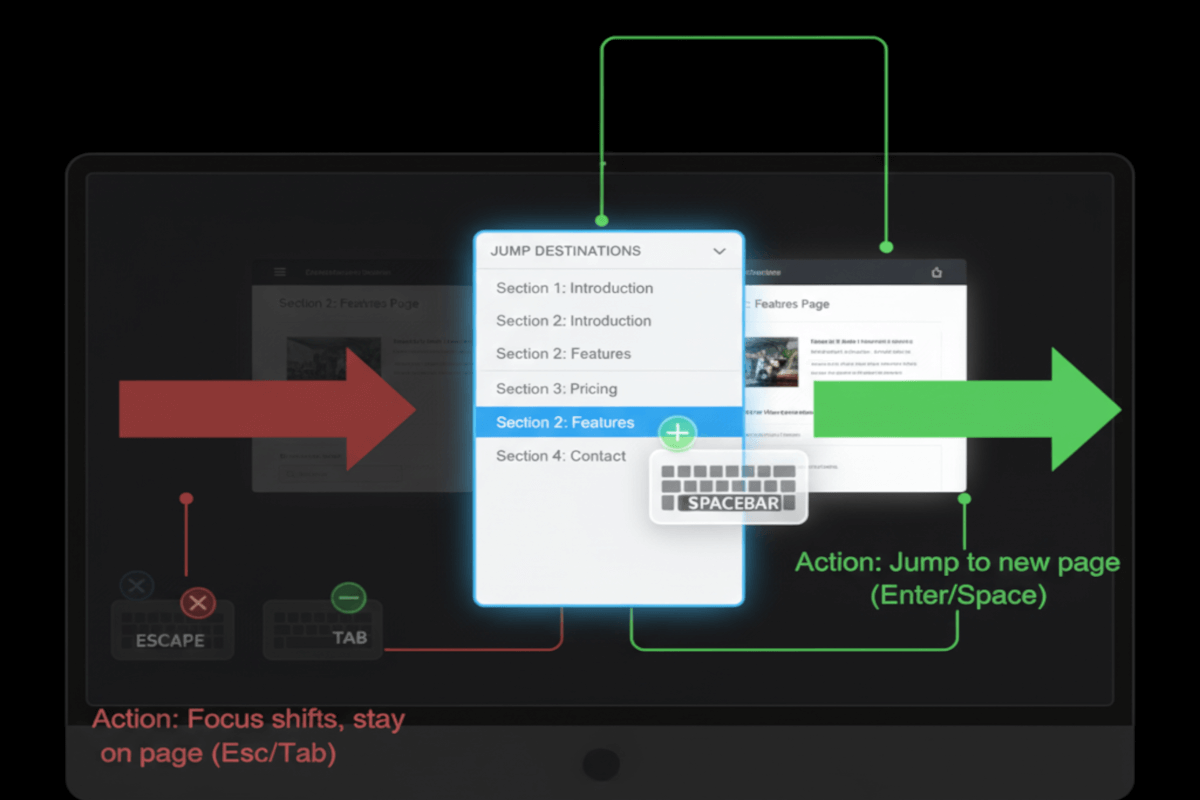

Testing via Manual Evaluation

Manual testing remains the gold standard for validating focus appearance in real-world contexts. Human testers can navigate interfaces using only keyboards or assistive devices, assessing whether focus indicators are truly perceivable, visually distinct, and contextually meaningful. This includes evaluating dynamic components, interactive widgets, and other scenarios that automation or AI may misread.

The primary drawbacks of manual testing are time, scalability, and susceptibility to human inconsistency. It requires skilled testers and careful planning to ensure coverage, but its insights are irreplaceable, capturing the nuances of human perception and interaction that technology alone cannot replicate.

Which approach is best?

No single method captures the full picture of WCAG 2.4.13 Focus Appearance. A hybrid approach, combining automation, AI, and manual testing, creates a comprehensive framework for verification and validation.

A robust, hybrid approach to testing WCAG 2.4.13 Focus Appearance begins with automated testing as its foundation. Automation excels at scanning entire websites or applications at scale, rapidly detecting missing or improperly implemented focus indicators, highlighting inconsistencies in focus styles, and flagging elements that fail basic accessibility rules. By integrating these checks into CI/CD pipelines, development teams can catch structural and technical issues early, maintain ongoing compliance, and ensure accessibility is part of the workflow rather than an afterthought.

Building on this foundation, AI-based testing introduces a layer of perceptual intelligence. By simulating human vision, AI evaluates whether focus indicators are not only present but truly visible and distinguishable across devices, screen sizes, zoom levels, and color schemes. AI can uncover subtle usability issues often overlooked by automation, such as low-contrast indicators, overlapping elements, or visual cues that blend into complex backgrounds, providing a more context-aware, realistic assessment of user experience.
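Color schemes matter because an indicator drawn over the page background is compared against that background in its unfocused state. An indicator that works in one theme can therefore disappear in another. The sketch below checks hypothetical indicator colors against assumed light and dark backgrounds. It also illustrates the reasoning behind the two-color technique listed under Related Resources.

Contrast ratios multiply: the ratio from the darker color to any background, times the ratio from that background to the lighter color, equals the ratio between the two colors. So if two indicator colors contrast with each other by at least 9:1, at least one of them reaches 3:1 against any background.

```python
from typing import Dict, Sequence, Tuple

RGB = Tuple[int, int, int]


def _linear(c: int) -> float:
    c = c / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def _luminance(p: RGB) -> float:
    r, g, b = (_linear(v) for v in p)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast(a: RGB, b: RGB) -> float:
    lighter, darker = sorted((_luminance(a), _luminance(b)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def check_schemes(indicator: Sequence[RGB], backgrounds: Dict[str, RGB],
                  minimum: float = 3.0) -> Dict[str, bool]:
    """For each named background, True if at least one indicator color reaches `minimum`:1."""
    return {
        name: max(contrast(color, bg) for color in indicator) >= minimum
        for name, bg in backgrounds.items()
    }


schemes = {"light": (255, 255, 255), "dark": (18, 18, 18)}  # assumed theme backgrounds
single = [(0, 0, 0)]                     # hypothetical black-only indicator
two_tone = [(0, 0, 0), (255, 255, 255)]  # hypothetical black-and-white indicator

print(check_schemes(single, schemes))    # {'light': True, 'dark': False}
print(check_schemes(two_tone, schemes))  # {'light': True, 'dark': True}

# Black and white contrast by 21:1 (well above 9:1), so every grey background passes.
greys = {f"grey {v}": (v, v, v) for v in range(256)}
print(all(check_schemes(two_tone, greys).values()))  # True
```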

The final and most critical layer is manual testing, where human evaluators navigate interfaces using keyboards or assistive technologies to validate that focus indicators are perceivable, consistent, and meaningful in practical scenarios. Manual testing captures nuances that technology cannot, including dynamic content, custom widgets, and context-specific interactions, ensuring that accessibility is not just compliant but genuinely usable for real people.

By integrating automated, AI-based, and manual testing, organizations achieve a holistic, multi-dimensional assessment of focus appearance. Automation ensures efficiency and coverage, AI adds perceptual depth and context, and manual evaluation confirms real-world usability, together creating a reliable, user-centered approach that elevates accessibility from a checklist to a strategic commitment to inclusive design.
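One way to picture how the three layers combine is a per-component triage rule. The sketch below is hypothetical throughout. The FocusResult fields, the 0.8 cut-off for an AI visibility score, and the status labels are illustrative assumptions, not part of WCAG or of any testing tool. The rule follows the ordering described above:

- A human verdict, when one exists, is final.
- An automated failure is treated as a defect.
- Anything the AI layer is not confident about is queued for manual review.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class FocusResult:
    component: str
    automated_pass: bool                          # e.g. no outline removed without replacement
    ai_visibility_score: Optional[float] = None   # hypothetical 0..1 "indicator is visible" estimate
    manual_pass: Optional[bool] = None            # None until a human has tested it


def triage(result: FocusResult, ai_cutoff: float = 0.8) -> str:
    if result.manual_pass is not None:   # human evaluation is the final layer
        return "pass" if result.manual_pass else "fail"
    if not result.automated_pass:        # structural defect flagged by automation
        return "fail"
    if result.ai_visibility_score is None or result.ai_visibility_score < ai_cutoff:
        return "needs manual review"
    return "likely pass, confirm manually"


results = [
    FocusResult("Search button", automated_pass=False),
    FocusResult("Nav link", automated_pass=True, ai_visibility_score=0.55),
    FocusResult("Card", automated_pass=True, ai_visibility_score=0.93),
    FocusResult("Date picker", automated_pass=True, ai_visibility_score=0.95, manual_pass=False),
]
for r in results:
    print(r.component, "->", triage(r))
```

The date picker shows why the manual layer sits last. It passes both automated layers but fails when a person actually uses it.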

Related Resources

- Understanding SC 2.4.13 Focus Appearance

- Mind the WCAG automation gap

- A guide to designing accessible focus indicators

- Avoid Default Browser Focus Styles

- Focus appearance – testing version 3

- Focus visible testing

- Using an author-supplied, visible focus indicator

- Creating a two-color focus indicator to ensure sufficient contrast with all components

- Creating a strong focus indicator within the component