Note: The creation of this article on testing Focus Not Obscured (Enhanced) was human-based, with the assistance of artificial intelligence.

Explanation of the success criteria

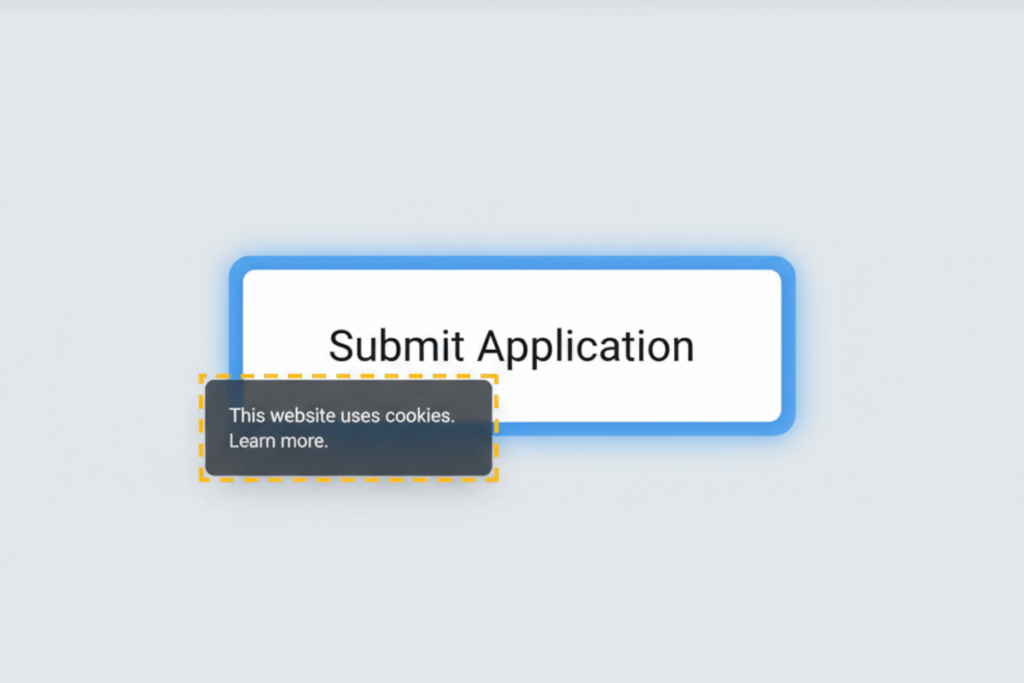

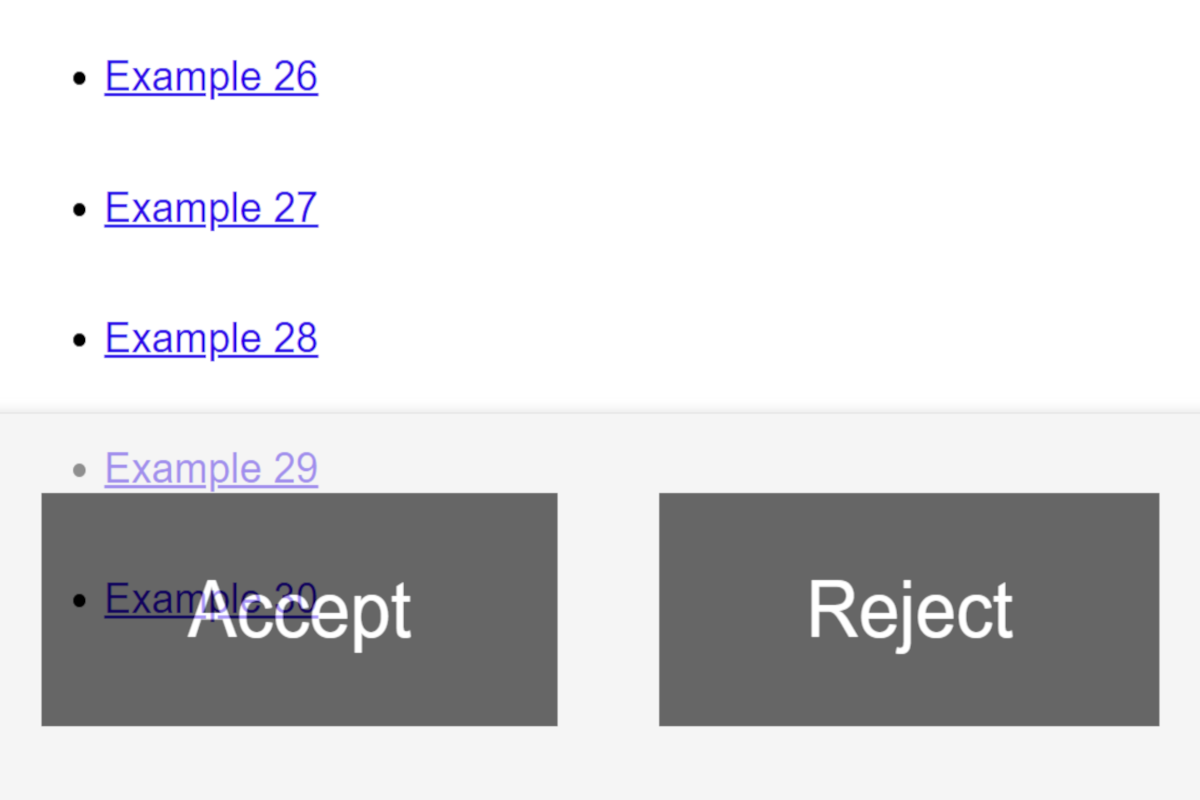

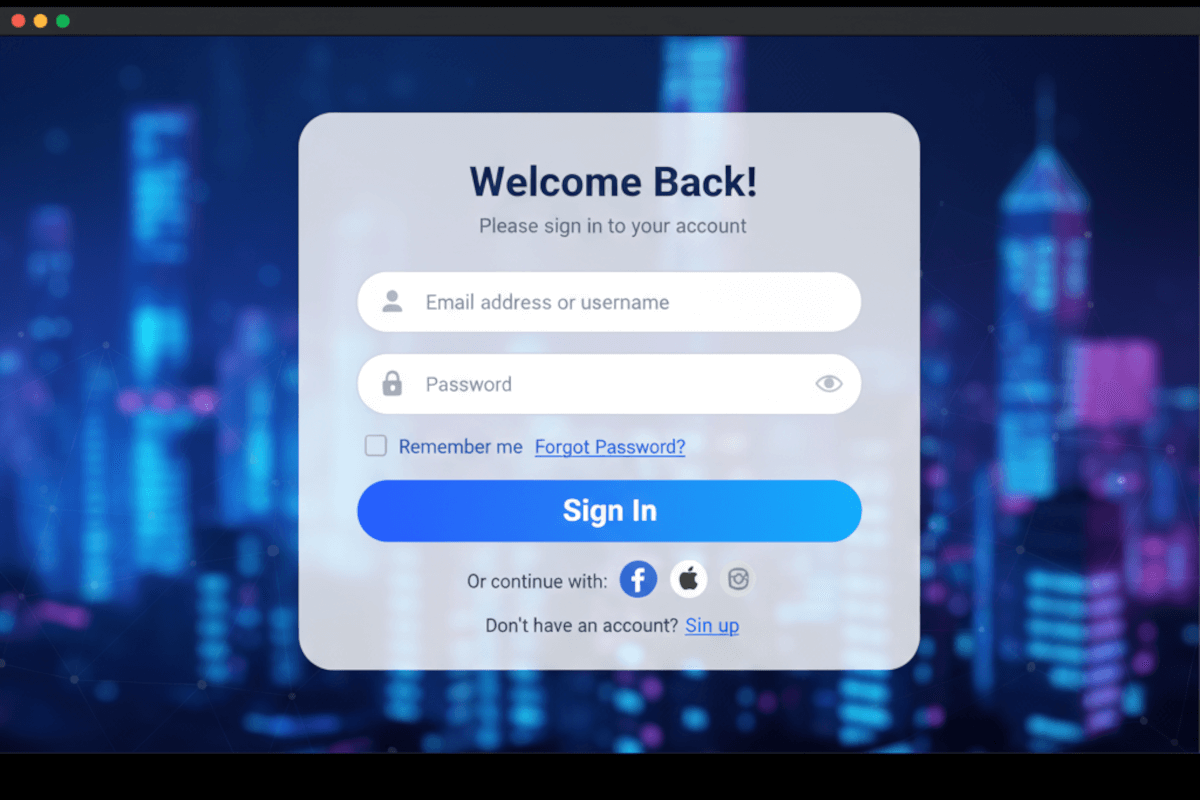

WCAG 2.4.12 Focus Not Obscured (Enhanced) is a Level AAA Success Criterion. It requires that when a user interface component receives keyboard focus, no part of that component is hidden by author-created content: not partially covered, not tucked behind a sticky header, and not buried beneath an overlay or modal.

This builds upon WCAG 2.4.11 Focus Not Obscured (Minimum), a Level AA criterion that only requires that the focused component not be entirely hidden. The Enhanced version raises the bar: it demands complete focus visibility, eliminating ambiguity and ensuring users can always perceive where they are within the interface.
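The difference between the two criteria can be sketched as a small geometry check. This is an illustrative sketch only, not an official conformance algorithm: the `Rect` shape and the function names are hypothetical, and it assumes the caller already has viewport-relative rectangles for the focused component and for any overlaying content (for example from `getBoundingClientRect()` in a browser).

```typescript
// Axis-aligned rectangle in viewport coordinates (CSS pixels).
interface Rect {
  left: number;
  top: number;
  right: number;
  bottom: number;
}

type Obscuration = "none" | "partial" | "full";

// Area of the overlap between two rectangles (0 if they do not intersect).
function overlapArea(a: Rect, b: Rect): number {
  const width = Math.min(a.right, b.right) - Math.max(a.left, b.left);
  const height = Math.min(a.bottom, b.bottom) - Math.max(a.top, b.top);
  return width > 0 && height > 0 ? width * height : 0;
}

// Simplifying assumption: "full" is reported only when a single overlay
// covers the whole component. Several overlays that jointly cover it are
// reported as "partial".
function classifyObscuration(focused: Rect, overlays: Rect[]): Obscuration {
  const area =
    (focused.right - focused.left) * (focused.bottom - focused.top);
  let anyOverlap = false;
  for (const overlay of overlays) {
    const covered = overlapArea(focused, overlay);
    if (area > 0 && covered >= area) return "full";
    if (covered > 0) anyOverlap = true;
  }
  return anyOverlap ? "partial" : "none";
}

// 2.4.11 (Minimum) tolerates partial obscuration; 2.4.12 (Enhanced) does not.
function passesMinimum(o: Obscuration): boolean {
  return o !== "full";
}

function passesEnhanced(o: Obscuration): boolean {
  return o === "none";
}
```

For example, a link spanning `top: 60` to `bottom: 90` beneath a sticky header that ends at `bottom: 80` is classified as `"partial"`: it would satisfy the Minimum check but not the Enhanced one.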

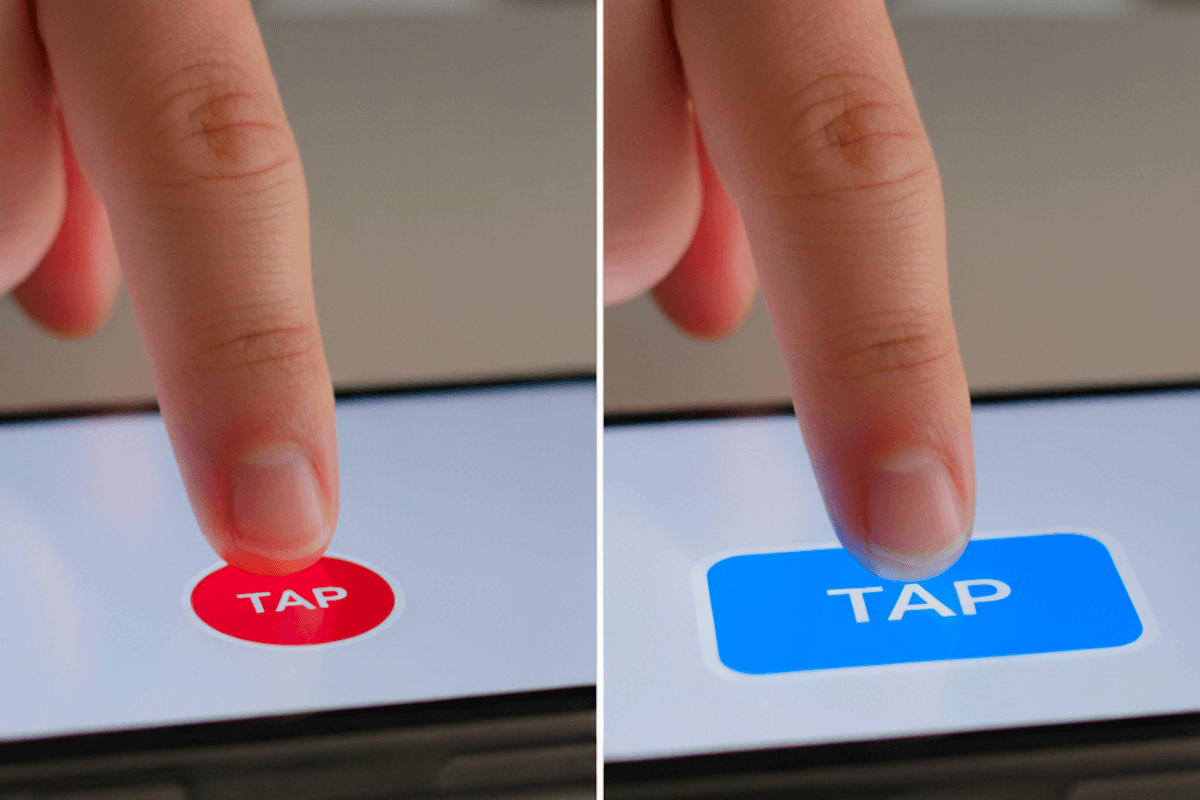

For anyone who navigates using a keyboard, this clarity is not optional; it is foundational. Losing sight of focus breaks orientation, interrupts task flow, and undermines confidence. Even a small obstruction can cause frustration or disorientation, especially for users with motor or visual disabilities.

But the true power of this criterion lies beyond technical compliance. It embodies a philosophy of intentional design, where clarity, feedback, and inclusivity are built into every interaction. Striving for Level AAA standards signals that an organization sees accessibility not as a checkbox, but as a mark of quality, empathy, and digital maturity.

Who does this benefit?

- Keyboard-only users: Always know where focus is, ensuring smooth, predictable navigation without a mouse.

- Users with motor disabilities: Depend on visible focus cues to confirm successful interactions with buttons, links, and inputs.

- Low-vision users: Use magnification or zoom and benefit from full focus visibility, preserving context and reducing cognitive load.

- Users with cognitive disabilities: Gain reassurance and comprehension through consistent, visible feedback during navigation.

- Assistive technology users with custom focus indicators: Rely on unobstructed layouts so personalized focus enhancements remain effective.

- All users in complex interfaces: Benefit from stable focus behavior within dynamic content, modals, or sticky UI elements.

At its core, 2.4.12 helps everyone. Visibility builds trust. Predictability reduces friction. When users can see exactly where they are, they engage with confidence.

Testing via Automated Testing

Automated testing provides scale and speed. It can quickly scan thousands of pages to identify structural risk factors, such as fixed-position headers, sticky footers, or elements with overflow: hidden, that may cause focus to be obscured. These scans are invaluable for maintaining consistency across large codebases and preventing regressions during development.
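A structural scan of this kind might look like the following minimal sketch. It assumes a crawler has already collected computed styles into plain records; the `StyleRecord` shape and the `findFocusObscuringRisks` name are hypothetical and not taken from any particular tool. The findings are risk signals for follow-up, not confirmed failures.

```typescript
// Hypothetical record of the computed styles a crawler might collect
// for each element (for example via getComputedStyle in a browser).
interface StyleRecord {
  selector: string;
  position: string; // computed `position` value
  overflow: string; // computed `overflow` value, e.g. "visible" or "hidden auto"
}

interface RiskFinding {
  selector: string;
  reason: string;
}

// Flags elements whose styles are common causes of obscured focus.
function findFocusObscuringRisks(records: StyleRecord[]): RiskFinding[] {
  const findings: RiskFinding[] = [];
  for (const record of records) {
    if (record.position === "fixed" || record.position === "sticky") {
      findings.push({
        selector: record.selector,
        reason: `position: ${record.position} may overlay focused content`,
      });
    }
    if (record.overflow.indexOf("hidden") !== -1) {
      findings.push({
        selector: record.selector,
        reason: "overflow: hidden may clip focused content",
      });
    }
  }
  return findings;
}
```

Each finding names the element and the reason it was flagged, which gives a reviewer a concrete place to start keyboard testing.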

However, automation hits its limits quickly for this criterion. It can’t interpret visual layering, real-time rendering, or dynamic positioning. A focused element might be present and unhidden in the DOM yet visually covered on the screen. Automated results should be viewed as signals, not verdicts: pointers to where deeper investigation is needed.

Testing via Artificial Intelligence (AI)

AI-based testing bridges the gap between automation and perception. Using computer vision, visual diffing, and interaction simulation, AI tools can detect when a focus indicator becomes hidden or partially covered. They analyze focus movement, screen rendering, and scrolling behavior to provide insight closer to real user experience. AI excels at spotting visual patterns automation misses, like modals that appear but fail to move focus, or sticky headers that clip focus outlines on smaller screens. It adds valuable context, helping testers understand how focus behaves across various devices and resolutions.

Yet, AI is not infallible. It may misinterpret custom focus styles, struggle with animations, or overlook subtle dynamic behavior. It brings contextual intelligence, but still relies on human review for interpretation and confirmation.

Testing via Manual Testing

Manual testing remains the cornerstone of accessibility verification for WCAG 2.4.12 because it brings the human experience directly into the evaluation process. Unlike automation or AI, human testers navigate as real users do, tabbing through interfaces, observing how focus shifts and elements come into view, and verifying that nothing slips beneath sticky headers, hidden panels, or overlapping content. This hands-on approach reveals the subtle issues that technology alone cannot capture: sticky navigation elements that partially obscure focus, dialogs that trap or misplace it, and scrollable regions that fail to bring newly focused components into view. Each of these scenarios affects usability in ways that defy code analysis or visual simulation.

The challenge, of course, is scalability: manual testing requires time, discipline, and expertise.

Which approach is best?

Testing WCAG 2.4.12 Focus Not Obscured (Enhanced) requires more than verifying code or rendering; it demands confirming that focused elements remain fully visible under real-world interaction. No single testing method can capture that level of nuance. A hybrid approach, integrating automation, AI, and manual review, provides the most comprehensive and reliable evaluation.

Automated testing forms the foundation by detecting structural patterns that can lead to focus obstruction. Tools can identify fixed or sticky elements, monitor CSS properties like position: fixed, overflow: hidden, or z-index, and flag regions where content may overlap focusable elements. Automation is ideal for scale: it runs continuously in CI/CD pipelines, preventing regressions when layouts or headers change. However, automation’s role is diagnostic, not definitive: it identifies risk areas, not guaranteed failures.

AI-based testing extends this foundation by introducing visual intelligence. By simulating how focus behaves during real navigation, AI models can detect when a focused element is partially or fully obscured. This includes visual diffing between pre-focus and post-focus states, analyzing the position of focus outlines relative to the viewport, and flagging instances where focus indicators fall under fixed layers or off-screen content. AI also helps expose subtle interactions, like when modals open but fail to shift focus properly, or when focus moves beneath sticky components. While AI-based testing adds depth and visual realism, it still benefits from human interpretation to validate complex or animated states.
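The visual diffing step can be illustrated with a deliberately simple sketch. It is not how any specific AI tool works; it assumes two grayscale screenshots of the same size (before and after focus moves), that nothing else on screen changes between them, and that the focus indicator traces the border of the focused element. The `PixelBox` type and both function names are hypothetical.

```typescript
// Bounding box in screenshot pixel coordinates (right/bottom are exclusive).
interface PixelBox {
  left: number;
  top: number;
  right: number;
  bottom: number;
}

// Frames are hypothetical grayscale screenshots: frame[y][x] in 0..255.
// Returns the bounding box of pixels that changed by more than `threshold`,
// or null when nothing visibly changed.
function diffBoundingBox(
  before: number[][],
  after: number[][],
  threshold: number = 16
): PixelBox | null {
  let minX = Infinity;
  let minY = Infinity;
  let maxX = -Infinity;
  let maxY = -Infinity;
  const rows = Math.min(before.length, after.length);
  for (let y = 0; y < rows; y++) {
    const cols = Math.min(before[y].length, after[y].length);
    for (let x = 0; x < cols; x++) {
      if (Math.abs(before[y][x] - after[y][x]) > threshold) {
        minX = Math.min(minX, x);
        minY = Math.min(minY, y);
        maxX = Math.max(maxX, x);
        maxY = Math.max(maxY, y);
      }
    }
  }
  if (maxX < 0) return null; // no pixel changed
  return { left: minX, top: minY, right: maxX + 1, bottom: maxY + 1 };
}

// Heuristic: the indicator looks clipped when no change was rendered at all,
// or when the changed region does not span the element's expected box.
function indicatorLooksClipped(
  expected: PixelBox,
  changed: PixelBox | null
): boolean {
  if (changed === null) return true;
  return (
    changed.left > expected.left ||
    changed.top > expected.top ||
    changed.right < expected.right ||
    changed.bottom < expected.bottom
  );
}
```

A result of `true` is only a prompt for human review: animations, custom focus styles, or unrelated screen changes can all trip a heuristic like this.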

Manual testing completes the hybrid process by applying human judgment and contextual understanding. Skilled testers use a keyboard to navigate through every interactive component (links, buttons, menus, form controls, and modals) and confirm that focus remains entirely visible without obstruction. They evaluate dynamic content, scrolling behavior, and responsive layouts, ensuring the experience is consistent across viewports and devices. Manual testing also validates intent: confirming that the user experience is logical, perceivable, and frustration-free.

When these three layers work together, they create a powerful testing ecosystem. Automation identifies structural risks early, AI exposes visual obstructions under real conditions, and manual testing ensures usability and conformance. The result is not just compliance with WCAG 2.4.12, but genuine accessibility: interfaces that respect focus visibility, maintain orientation, and support every user who navigates by keyboard.